Building Trust Through Engineered Objectivity

Amid today’s complex regulatory landscape and activist-driven attacks on science, SciPinion was established to build trust and maintain credibility through our Certified Peer Reviews.

Our goal is to bring clarity and certainty from the expert community to the world’s toughest science problems, instilling universal trust in science.

A Structured Path to Defensible Conclusions

Every SciPinion engagement follows a defined methodology — from expert recruitment through final reporting. The structure isn’t bureaucracy; it’s what makes the findings reproducible and the conclusions defensible.

Independent Panels, Consistent Results

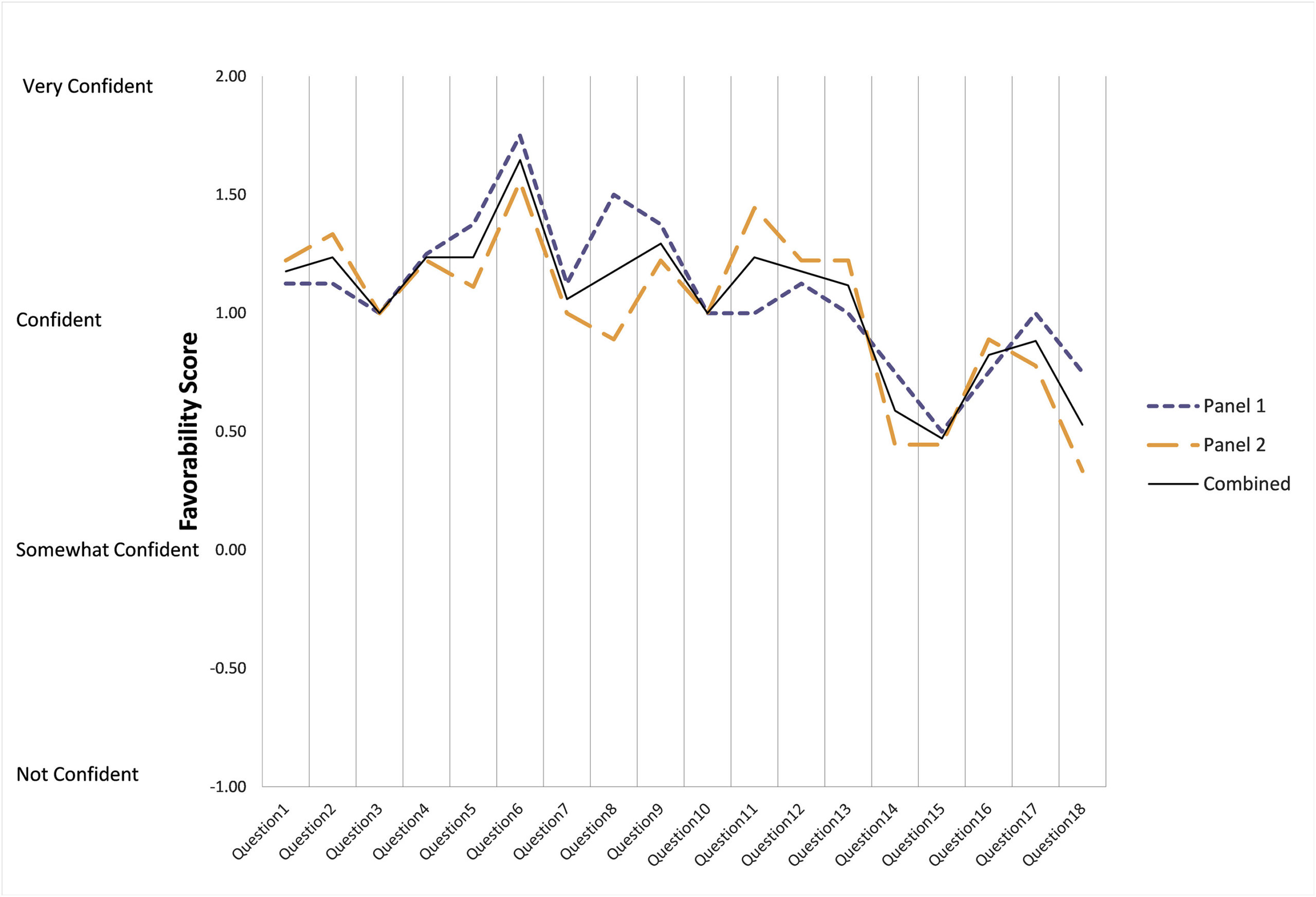

When two independent panels review the same materials using our methodology, their findings align. The graph shows favorability scores from Panel 1 and Panel 2 across 18 charge questions. The convergence of the two panels demonstrates that our approach yields reproducible outcomes.

No other company or government body has tested whether its approach to assembling and conducting peer review panels yields reproducible results, or proven that it does.

Three Phases of Every Engagement

A SciPi is defined as a collection of Scientific oPinions. Each panel engagement involves three phases, with design options tailored to project objectives.

Pool of Ideal Reviewers

The ideal reviewer sits at the intersection of four criteria. Our recruitment process identifies experts who meet all four.

Why Traditional Panels Fail

Face-to-face deliberations suffer from well-documented cognitive and social biases. Our methodology addresses each of these failure points by design.

Groupthink

Social pressure drives conformity around incorrect positions.

Deference

Panelists defer to perceived authority regardless of argument quality.

Amplification

Early opinions get reinforced, drowning out alternatives.

Overbearing Members

Dominant personalities silence quieter experts.

Why We Do Things the Way We Do

Panel Selection

When we select a panel of experts for a peer review, we use an objective, quantitative metric of expertise, and a model picks the panel. The entire process is objective, quantitative, transparent, and reproducible.

We make no assumptions or prejudgments about what any particular expert’s opinions will be. If a sponsor wants or requires particular demographic diversity (for example, region of residence, gender, or sector of employment), the model can incorporate those requirements without compromising objectivity.

Panel Engagement

All engagement with experts takes place online through the SciPinion web app. We do this to eliminate the negative influences of face-to-face meetings. While the experts work online, their identities are not disclosed; they are labeled sequentially as Expert 1, Expert 2, and so on.

This prevents undue influence from recognizing peers and gives experts greater psychological safety.

Upon completion, one of three disclosure options applies: experts are identified but no key linking names to expert labels is provided (our preferred option); experts are identified and the key is provided; or, in rare cases involving highly controversial topics, experts remain anonymous in perpetuity.

Trusted by Government Agencies

SciPinion is trusted by government agencies including Health Canada, the Centers for Disease Control and Prevention (CDC), and the U.S. EPA for rigorous, transparent scientific evaluations.

“The U.S. Environmental Protection Agency (EPA) acknowledges that SciPinion’s peer review process meets or exceeds the EPA’s FIFRA Science Advisory Panel (SAP) process in every point of comparison.”

— EPA Report No. 22-E-0053

Let’s Discuss Your Science

Tell us about your scientific question, and we’ll help you determine the right approach: a Certified Peer Review panel, an expert survey, or a targeted consultation.